Introduction

The same AI technology that enables organizations to detect threats in seconds is now being weaponized by attackers to craft more sophisticated campaigns at machine speed. In 2025, AI-enabled adversary attacks surged by 89% year-over-year, driving average breakout times down to just 29 minutes—a 65% increase in speed from the previous year. That gap—between how fast AI defends and how fast it attacks—is where most businesses are currently exposed.

This post covers what AI security actually means, the specific risks it introduces, the principles that should guide its deployment, and the concrete benefits it delivers—particularly for growing businesses that don't have a large security team behind them.

TLDR

- AI security covers two directions: using AI to strengthen defenses and protecting AI systems from being exploited

- Unique risks include adversarial attacks, data poisoning, and model manipulation

- Attackers now use AI to craft phishing campaigns, generate malware, and time attacks with alarming precision

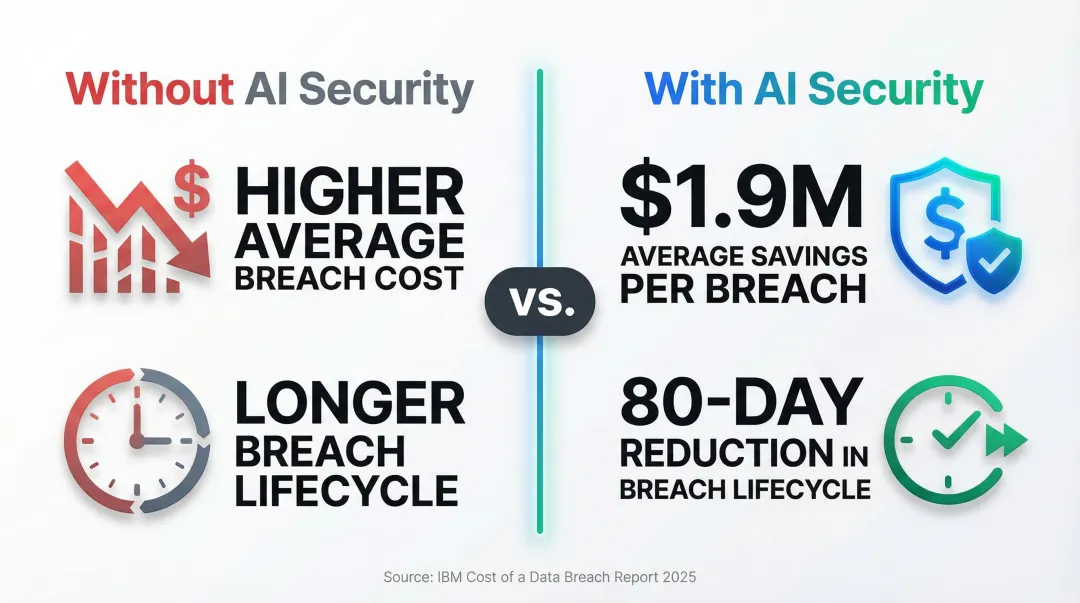

- Organizations using AI extensively save $1.9 million per breach and reduce breach lifecycles by 80 days

- Effective AI security depends on data governance, model transparency, and ongoing behavioral monitoring

What Is AI Security?

AI security has two distinct but interconnected definitions. First, it's the application of artificial intelligence to enhance cybersecurity defenses—automating threat detection, behavioral analysis, and anomaly identification across networks, endpoints, and cloud environments. Second, it's the practice of securing AI systems themselves from misuse, exploitation, and attack. Both definitions matter because modern businesses face threats on both fronts.

Most AI security tools use machine learning and deep learning to analyze massive datasets—network traffic, user behavior, login patterns—and establish a baseline of "normal" activity. The system then automatically flags deviations as potential threats.

For example, if a user who typically logs in from London suddenly authenticates from Lagos at 3 AM, AI-driven behavioral analytics immediately flags the session as high-risk. The system can trigger automated investigation or block access before any damage occurs.
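The baseline-and-deviation idea can be illustrated with a minimal sketch. It is not how any particular product works: real tools use learned models over many signals, while this toy version stands in simple frequency counts. The `Login` record, the 0.6 and 0.4 weights, and the function names are assumptions made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Login:
    user: str
    city: str
    hour: int  # local hour of day, 0-23


def build_baseline(history):
    """Count how often each user has logged in from each city and at each hour."""
    cities, hours = {}, {}
    for event in history:
        cities.setdefault(event.user, Counter())[event.city] += 1
        hours.setdefault(event.user, Counter())[event.hour] += 1
    return cities, hours


def risk_score(event, baseline):
    """Return a score from 0 to 1; higher means further from the user's baseline."""
    cities, hours = baseline
    seen_cities = cities.get(event.user, Counter())
    seen_hours = hours.get(event.user, Counter())
    score = 0.0
    if seen_cities[event.city] == 0:  # location never seen for this user
        score += 0.6                  # illustrative weight, not a tuned value
    if seen_hours[event.hour] == 0:   # hour of day never seen for this user
        score += 0.4                  # illustrative weight, not a tuned value
    return score


# A user who normally logs in from London during working hours.
history = [Login("alice", "London", h) for h in (8, 9, 9, 10, 17, 18)]
baseline = build_baseline(history)

usual = risk_score(Login("alice", "London", 9), baseline)
unusual = risk_score(Login("alice", "Lagos", 3), baseline)
```

Here the London login at 9 AM matches the baseline on both counts, while the Lagos login at 3 AM deviates on both and receives the highest score. A downstream policy could then decide whether to investigate or block the session.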

The second definition—securing AI deployments—has become critical as generative AI and large language models embed themselves in business operations. According to the 2025 IBM Cost of a Data Breach Report, 63% of breached organizations either lack an AI governance policy or are still developing one. Of the 13% of organizations that reported breaches involving AI models or applications, 97% lacked proper AI access controls.

How AI Has Evolved in Cybersecurity

Traditional security tools like SIEM platforms and firewalls generate more data than human analysts can process manually. The average enterprise generates roughly 450,000 alerts per year, and over 60% of alerts go unreviewed due to capacity constraints. AI stepped in to analyze this data at scale—spotting patterns, reducing alert fatigue, and enabling faster triage. Machine learning models improve over time by continuously learning from new data, becoming more accurate at distinguishing genuine threats from false positives.

According to the 2025 SANS Detection & Response Survey, 73% of organizations list false positives as their number one challenge in threat detection. AI-driven behavioral analytics address this by reducing false positives by up to 60% compared to traditional rule-based approaches.

How Cybercriminals Are Weaponizing AI

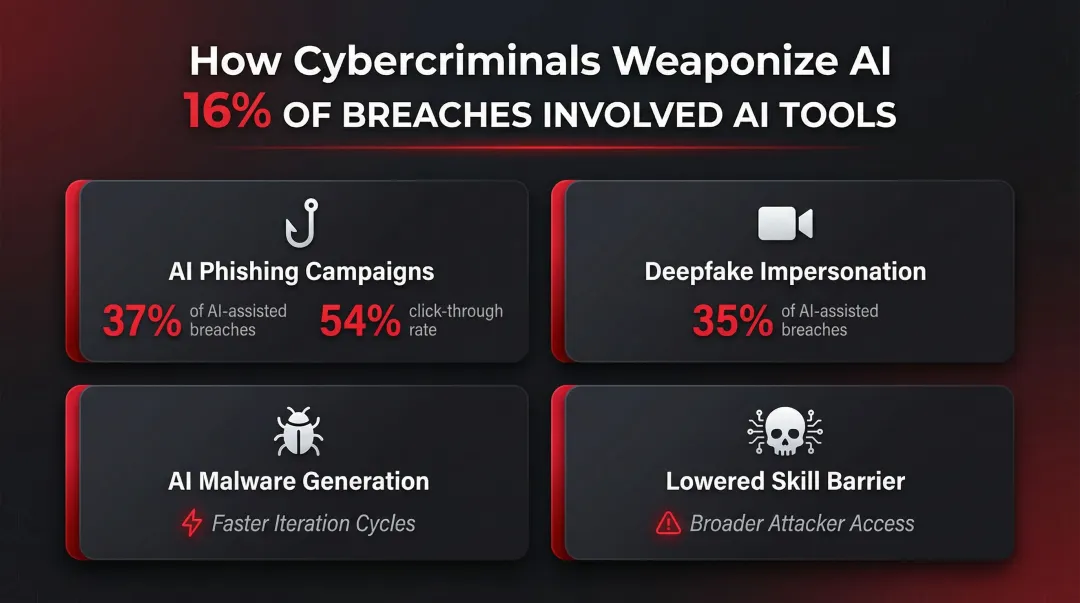

Threat actors are already exploiting mainstream AI tools and open-source models stripped of ethical safeguards. Common attack methods include:

- Automating phishing campaigns at scale with personalized lures

- Generating convincing deepfakes for voice and video impersonation

- Building and iterating malware faster than traditional security can respond

- Lowering the technical barrier so less-skilled attackers can launch sophisticated campaigns

IBM's 2025 data shows that 16% of all breaches studied involved attackers using AI tools, primarily for AI-generated phishing (37%) and deepfake impersonation attacks (35%). LLM-generated phishing emails achieve a 54% click-through rate—compared to just 12% for traditional phishing.

AI Security Risks and Vulnerabilities

AI introduces unique risks distinct from traditional cybersecurity threats. Understanding these vulnerabilities is essential before deploying or relying on AI systems.

Adversarial Attacks

Adversarial attacks involve threat actors crafting deceptive inputs to fool AI models into incorrect predictions or classifications. Attackers can bypass fraud detection systems or evade malware scanners by subtly modifying malicious code — changes imperceptible to humans but effective at confusing AI classifiers.

A 2023 study published at the USENIX Security Symposium demonstrated successful evasion attacks against raw-binary malware classifiers, with state-of-the-art attacks achieving 90% success rates. However, by applying adversarial training, researchers reduced the attack success rate from 90% to just 5%.

Prompt injection represents a variant where malicious prompts trick large language models into leaking data or taking harmful actions—a growing concern as businesses integrate LLMs into customer service, internal knowledge bases, and automated decision workflows.

Data Poisoning

Data poisoning occurs when attackers corrupt an AI model's training data to degrade performance, introduce biases, or create backdoors. A poisoned security model can systematically miss the exact attack types it was built to detect. Rigorous data validation and anomaly detection on training pipelines are the primary defenses.

The MITRE ATLAS framework documents real-world supply chain poisoning incidents, including the "PoisonGPT" case where researchers surgically modified an open-source GPT-J model to spread misinformation and successfully uploaded it to Hugging Face, a major public model hub. MITRE ATLAS also notes instances of malicious models hosted on Hugging Face that were purposefully corrupted to execute reverse shells and deploy malware upon deserialization.
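To make the deserialization risk concrete, here is a hedged sketch of one possible pre-load check for pickle-based model files. It uses Python's standard `pickletools` module to list opcodes without loading the file. The choice of opcodes to flag and the `Payload` class are assumptions for illustration. Legitimate pickled models also contain some of these opcodes, so a real scanner would pair this with an allowlist of permitted imports rather than treat every hit as malicious.

```python
import pickle
import pickletools

# Opcodes that can import or call arbitrary objects while a pickle is loaded.
RISKY_OPCODES = {"GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX"}


def risky_opcodes(data: bytes) -> set:
    """Return the risky opcode names found in a pickle stream, without loading it."""
    found = set()
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in RISKY_OPCODES:
            found.add(opcode.name)
    return found


class Payload:
    """Stand-in for a booby-trapped object; print is used as a harmless callable."""

    def __reduce__(self):
        return (print, ("this would run during unpickling",))


plain = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
trapped = pickle.dumps(Payload())  # serializing is safe; only loading would call print

plain_hits = risky_opcodes(plain)      # plain data needs no imports or calls
trapped_hits = risky_opcodes(trapped)  # contains a call instruction
```

The point of the sketch is that the file is inspected, never loaded. Weight-only formats that cannot carry executable instructions avoid this class of problem altogether.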

AI Model Theft and Manipulation

AI models themselves can be targeted—reverse engineered, stolen, or manipulated at the level of model weights or parameters. Attackers who understand a model's behavior can exploit its blind spots to evade detection. Model drift adds another layer of exposure: models that degrade over time become exploitable if not monitored and updated regularly.
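One common way to watch for drift is to compare the distribution of a model's recent scores against the distribution seen at training time. The sketch below uses the Population Stability Index as that comparison. This is a swapped-in illustration, not a method named in this post, and the bin count and the sample data are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two score samples; larger values mean the distributions differ more."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all expected values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical model scores: one live sample matches training, one has shifted.
training_scores = [i / 100 for i in range(100)]
live_same = list(training_scores)
live_shifted = [s + 0.3 for s in training_scores]

psi_same = population_stability_index(training_scores, live_same)
psi_shifted = population_stability_index(training_scores, live_shifted)
```

An unchanged sample yields an index of zero, and the shifted sample yields a much larger value. The alert threshold is a policy choice that each team would need to set and revisit.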

Data Privacy and Bias Risks

AI models require large training datasets, introducing data exposure and privacy risks. Biased training data produces biased outputs—a serious concern in security tools that may systematically fail to detect threats associated with certain user profiles or behaviors.

Regulatory exposure adds further pressure. The EU AI Act (Regulation (EU) 2024/1689) explicitly states that an AI system "shall always be considered to be high-risk where the AI system performs profiling of natural persons".

Enterprise security tools using AI for behavioral analytics or insider threat detection must assess whether their functions constitute "profiling" — a designation that triggers stringent compliance, transparency, and registration obligations.

Supply Chain and Shadow AI Risks

Supply chain attacks target third-party AI components or software libraries used in AI development. Shadow AI—the unsanctioned use of AI tools by employees—exposes sensitive organizational data through unmanaged channels.

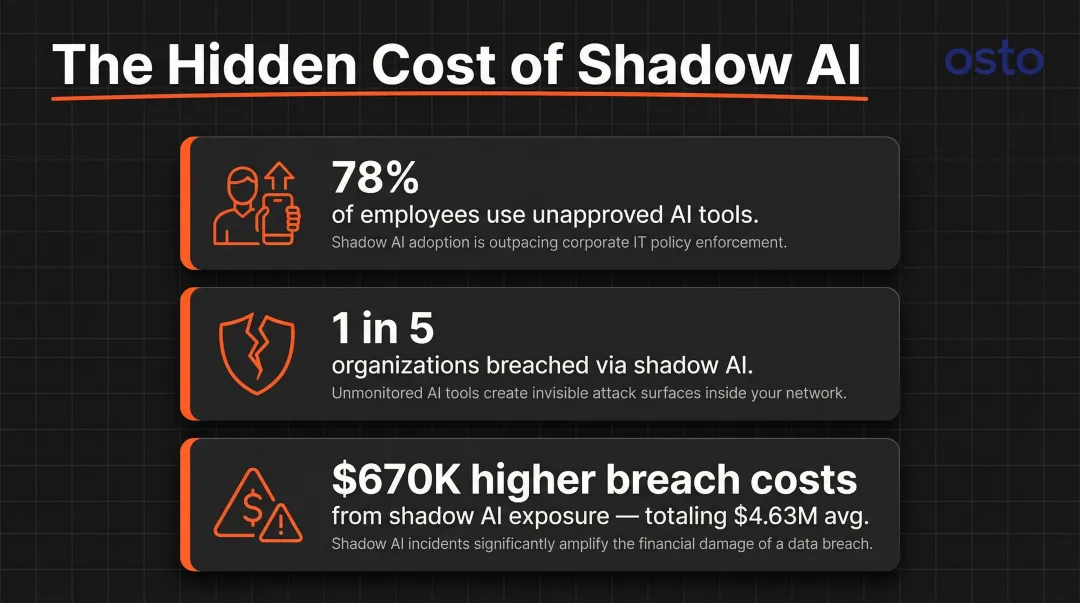

The scale of shadow AI exposure is significant:

- A 2025 WalkMe survey found 78% of employees admit to using AI tools not approved by their employer

- IBM reports one in five organizations suffered a breach due to shadow AI

- Organizations with high shadow AI usage saw breach costs rise by $670,000 compared to standard breaches, bringing the total to $4.63 million

How AI Is Used in Cybersecurity

Organizations deploy AI to strengthen defenses across security domains, delivering measurable improvements in detection speed, accuracy, and response capabilities.

Threat Detection and Behavioral Analytics

AI continuously monitors network traffic, user activity, and endpoint behavior to identify anomalies in real time. Behavioral analytics builds a profile of normal user behavior and flags deviations—unusual data access, off-hours logins, unexpected outbound connections—that may signal account compromise or insider threats. This approach catches threats that signature-based tools miss entirely.

Traditional signature-based detection relies on known hashes and rules, making it blind to zero-day exploits and compromised-credential attacks. According to Vectra, 79% of detections in 2024 were malware-free: attackers relied on stolen credentials or living-off-the-land techniques that render signature-based tools ineffective.

That gap matters operationally. As noted earlier, machine learning-based anomaly detection can reduce false positives by up to 60% compared to rule-based approaches, a significant improvement given that 73% of organizations cite false positives as their top detection challenge.

Vulnerability Management and Automated Response

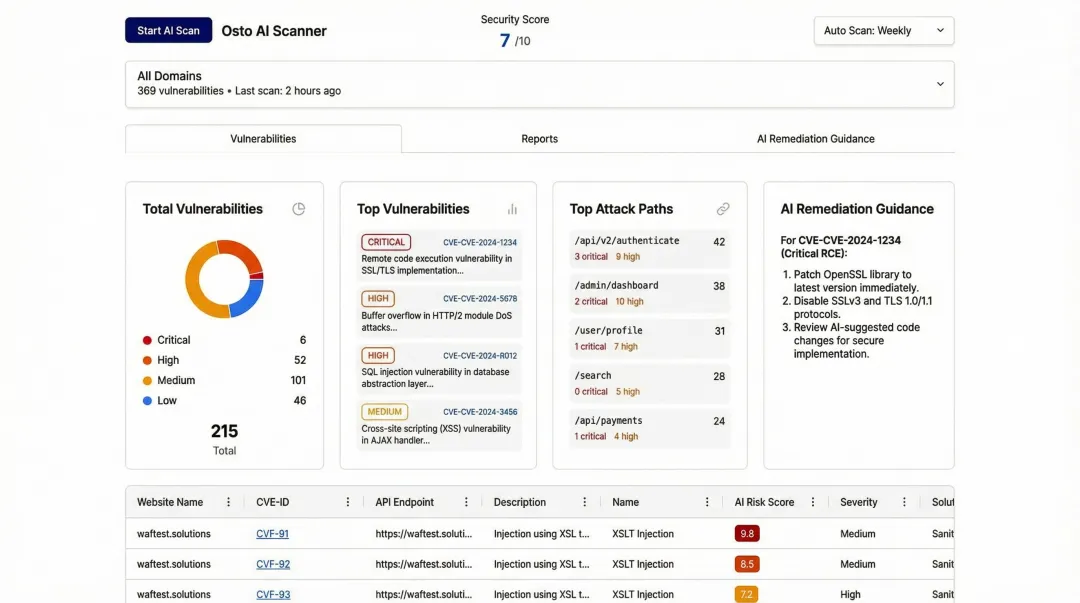

AI handles vulnerability scanning and prioritization in tandem — triaging findings by severity and exploitation likelihood, then triggering predefined playbooks when threats are detected. Security teams address the most critical risks first, and containment happens faster than any human-led response can match.
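As a rough sketch of the triage step, findings can be ranked by severity multiplied by exploitation likelihood, with a predefined playbook chosen from the result. The `Finding` fields, the threshold of 5.0, the playbook names, and the sample findings are all hypothetical. In practice the likelihood would come from a model or a threat feed rather than hand-entered values.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float            # 0-10, for example a CVSS-style base score
    exploit_likelihood: float  # 0-1, estimated chance of exploitation

    @property
    def priority(self) -> float:
        """Combined score used for ordering: severity times likelihood."""
        return self.severity * self.exploit_likelihood


def prioritize(findings):
    """Return findings sorted so the highest combined score comes first."""
    return sorted(findings, key=lambda f: f.priority, reverse=True)


def playbook_for(finding, threshold=5.0):
    """Pick a (hypothetical) playbook name based on the combined score."""
    return "contain-and-patch-now" if finding.priority >= threshold else "schedule-fix"


findings = [
    Finding("Verbose error page", severity=9.0, exploit_likelihood=0.05),
    Finding("SQL injection in login form", severity=9.8, exploit_likelihood=0.9),
    Finding("Outdated TLS configuration", severity=5.3, exploit_likelihood=0.2),
]

ranked = prioritize(findings)
actions = {f.name: playbook_for(f) for f in ranked}
```

Note that the verbose error page has a high raw severity but ranks last, because its estimated likelihood of exploitation is low. That reordering is the point of combining the two signals.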

According to the 2025 IBM Cost of a Data Breach Report, organizations using AI and automation extensively throughout their security operations reduced the breach lifecycle (mean time to identify and contain a breach) by an average of 80 days compared to organizations that did not use these technologies.

For growing businesses, platforms like Osto's AI-powered web vulnerability scanner use machine learning to analyze website security automatically. The scanner categorizes vulnerabilities by severity and provides remediation guidance with precise location details and step-by-step solutions, enabling rapid response without deep security expertise.

Identity and Access Management

AI enhances IAM by analyzing user behavior patterns to enable adaptive authentication—adjusting access requirements in real time based on risk level. AI also automates role-based access control (RBAC) by discovering identity patterns across the organization, reducing over-permissioning and the associated attack surface.
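Adaptive authentication can be pictured as a mapping from a session's risk to an access requirement. The following is a minimal sketch under stated assumptions: the signal names, point values, and thresholds are invented for illustration, and a production system would derive risk from a behavioral model rather than a fixed table.

```python
# Hypothetical risk signals and the points each one contributes (total 0-100).
SIGNAL_POINTS = {
    "new_device": 30,
    "new_location": 40,
    "off_hours": 20,
    "sensitive_resource": 10,
}


def session_risk(signals: dict) -> int:
    """Add up the points for every signal that is present in this session."""
    return sum(points for name, points in SIGNAL_POINTS.items() if signals.get(name))


def access_decision(risk: int) -> str:
    """Map a risk total to an access requirement (thresholds are illustrative)."""
    if risk < 30:
        return "allow"
    if risk < 70:
        return "require_mfa"
    return "block"


routine = access_decision(session_risk({}))
step_up = access_decision(session_risk({"new_device": True}))
denied = access_decision(session_risk({"new_device": True, "new_location": True}))
```

The same user can therefore pass straight through on a familiar device and be asked for a second factor, or refused, when more signals stack up.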

Cloud and Web Application Security

AI powers two distinct layers of infrastructure protection. In cloud environments, it drives security posture management (CSPM) by automatically discovering misconfigurations across resources — a near-impossible task to perform manually at scale. For web applications, AI-driven adaptive profiling monitors traffic patterns continuously, separating legitimate users from attackers without relying on static rule sets.
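To show what a misconfiguration finding looks like in practice, here is a small rule-based sketch over a made-up resource inventory. It is deliberately static and is not AI-driven. The resource fields, rule IDs, and inventory entries are assumptions and do not reflect any cloud provider's API or any vendor's data model.

```python
# Each rule pairs an ID with a predicate that returns True when a resource is misconfigured.
RULES = [
    ("public-storage",
     lambda r: r["type"] == "storage_bucket" and r.get("public_access", False)),
    ("unencrypted-disk",
     lambda r: r["type"] == "disk" and not r.get("encrypted", True)),
    ("ssh-open-to-internet",
     lambda r: r["type"] == "firewall_rule"
     and r.get("port") == 22 and r.get("source") == "0.0.0.0/0"),
]


def scan(resources):
    """Return (resource_id, rule_id) pairs for every rule a resource violates."""
    findings = []
    for resource in resources:
        for rule_id, is_violation in RULES:
            if is_violation(resource):
                findings.append((resource["id"], rule_id))
    return findings


# Hypothetical inventory, as a discovery step might produce it.
inventory = [
    {"id": "bucket-logs", "type": "storage_bucket", "public_access": True},
    {"id": "bucket-private", "type": "storage_bucket", "public_access": False},
    {"id": "disk-db", "type": "disk", "encrypted": False},
    {"id": "fw-ssh", "type": "firewall_rule", "port": 22, "source": "0.0.0.0/0"},
    {"id": "fw-https", "type": "firewall_rule", "port": 443, "source": "0.0.0.0/0"},
]

findings = scan(inventory)
```

Running checks like these across every resource and account is what becomes impractical to do by hand as the footprint grows.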

Osto's Cloud Security module covers this automatically, running periodic discovery across 35+ resource types on Azure, AWS, and GCP. It surfaces enriched metadata — configuration, networking, identity, and encryption details — and generates findings for misconfigurations, exposure risks, and security-critical issues, so teams can detect and remediate problems across their entire cloud footprint without manual audits.

Core Principles of AI Security

Effective AI security goes beyond technical controls. It requires governance structures and ethical commitments baked into how AI systems are built, deployed, and monitored. Three principles form the foundation.

1. Data Governance and Quality

AI is only as reliable as its training data. Formal data governance processes should ensure training data is accurate, diverse, regularly updated, and protected from tampering. Poor data quality is a root cause of many AI model vulnerabilities.

Defending against data poisoning starts at the pipeline level. This means:

- Validating inputs at ingestion to catch anomalies early

- Auditing training datasets on a defined schedule

- Logging all data changes with clear ownership and timestamps
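The three steps above can be sketched together: validate each record at ingestion, fingerprint the dataset so a scheduled audit can detect silent changes, and log every change with an owner and a timestamp. The field names, the allowed labels, and the in-memory audit log are assumptions for illustration. A real pipeline would use its own schema and durable storage.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical schema for a training record.
REQUIRED_FIELDS = {"src_ip": str, "bytes_sent": int, "label": str}
ALLOWED_LABELS = {"benign", "malicious"}


def validate(record: dict) -> list:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(record.get(field), expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")
    if isinstance(record.get("bytes_sent"), int) and record["bytes_sent"] < 0:
        problems.append("bytes_sent: must not be negative")
    if isinstance(record.get("label"), str) and record["label"] not in ALLOWED_LABELS:
        problems.append("label: not an allowed value")
    return problems


def fingerprint(records: list) -> str:
    """SHA-256 over a canonical JSON form, so any edit changes the digest."""
    canonical = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def log_change(audit_log: list, owner: str, records: list) -> None:
    """Append an entry recording who changed the dataset, when, and its digest."""
    audit_log.append({
        "owner": owner,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "rows": len(records),
        "sha256": fingerprint(records),
    })


good = {"src_ip": "10.0.0.5", "bytes_sent": 512, "label": "benign"}
bad = {"src_ip": "10.0.0.6", "bytes_sent": -1, "label": "unknown"}

dataset = [r for r in (good, bad) if not validate(r)]  # only valid records are kept
audit_log = []
log_change(audit_log, owner="data-team", records=dataset)

# A flipped label, as a poisoning attempt might introduce, changes the fingerprint.
tampered = [dict(good, label="malicious")]
```

Comparing the stored digest against a fresh one during each scheduled audit is a cheap way to notice that training data changed without a matching log entry.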

2. Transparency and Accountability

Document AI algorithms, data sources, and decision-making logic so security teams can explain AI-driven decisions to stakeholders and auditors. This also means maintaining visibility into how AI tools are used across the organization — including shadow AI.

Only 34% of organizations that report having AI governance policies actually perform regular audits for unsanctioned AI use. That gap is where blind spots turn into breaches.

3. Apply Security Controls to AI Systems

AI tools need the same protections as any other critical system: encryption, access controls, regular auditing, and red team testing. Treat AI models as high-value targets — because attackers already do.

This includes monitoring for model drift and decay, which can degrade performance silently over time. Among organizations that suffered AI-related breaches, 97% lacked proper AI access controls. That's a failure of prioritization, not technology.

Benefits of AI Security

The concrete, measurable advantages of AI security are especially relevant for growing businesses and startups that cannot afford large security teams or slow incident response.

Faster Detection and Response

AI analyzes millions of events in real time and identifies threats in seconds that would take human analysts hours or days to detect. This speed advantage directly reduces the window of exposure and limits damage. For resource-constrained teams, this means getting enterprise-grade detection without needing to staff a 24/7 SOC.

Organizations using AI extensively in security operations save an average of $1.9 million per breach compared to those that do not. That kind of cost difference is hard to ignore — especially when a single breach can derail a scaling business entirely.

Scalability and Continuous Protection

AI security solutions scale with the organization—protecting large, complex, or multi-cloud environments without proportional increases in headcount. For example, Osto's platform delivers continuous monitoring across Azure, AWS, and GCP from a single dashboard — no separate tools, no extra headcount required.

Specific capabilities that support this include:

- Nginx reverse-proxy architecture for fast traffic handling with minimal latency

- Dual-layer SSL encryption that protects end-to-end without touching your existing SSL setup

- AI-powered vulnerability scanning with 2x faster execution and improved detection accuracy

Frequently Asked Questions

What is AI security?

AI security is both the use of AI to strengthen cybersecurity defenses through automated threat detection, behavioral analytics, and incident response, and the practice of protecting AI systems themselves from attacks like data poisoning and adversarial manipulation.

What are the 4 types of AI risk?

The four commonly cited AI risk types are: adversarial attacks (manipulating inputs to fool the model), data poisoning (corrupting training data), model theft or manipulation (targeting the AI model itself), and ethical/compliance risks (bias, privacy violations, and regulatory non-compliance). Each category requires distinct mitigation strategies.

What is the best AI for security?

There is no single "best" AI security tool — the right choice depends on your environment, team size, and risk profile. Evaluate ML algorithm types, integration with existing tools, update frequency, and fit for your infrastructure (multi-cloud, web apps, endpoints).

What is the difference between AI security and traditional cybersecurity?

Traditional cybersecurity relies on rule-based, signature-driven defenses that require manual updates. AI security uses machine learning to detect unknown and evolving threats by analyzing behavioral patterns, enabling proactive, automated defense rather than reactive response.

How are cybercriminals using AI?

Threat actors use AI to automate phishing campaigns, generate convincing deepfakes, and accelerate malware development, often with mainstream AI tools or open-source models stripped of ethical safeguards. This lowers the cost and skill barrier for launching complex attacks.

What are the key principles of responsible AI security?

The three core principles are maintaining high-quality, governed training data; ensuring transparency and accountability in how AI tools operate; and applying the same security controls to AI systems — access controls, monitoring, and red teaming — as to any other critical infrastructure.