Introduction

The Verizon 2025 Data Breach Investigations Report documents a 180% increase in attackers exploiting vulnerabilities to gain initial access compared to the previous year. Between June 2024 and June 2025 alone, 39,080 new CVE records were published — more than most teams can realistically track.

The alert problem is just as severe. Modern SOC teams face an overwhelming 4,484 alerts daily, with 83% turning out to be false positives. Analysts end up ignoring 67% of all alerts simply due to fatigue.

AI is no longer a future concept in cybersecurity — it's the backbone of modern defense strategies. Organizations using AI and automation extensively reduced their breach lifecycles by 80 days and saved $1.9 million per incident, according to the IBM Cost of a Data Breach Report 2024. That same technology, however, is now in attackers' hands too, enabling smarter, more personalized threats at a scale human analysts can't match alone.

This post covers what AI in cybersecurity means, how it evolved from rule-based systems to generative models, the key defensive use cases, the threat landscape it creates, and what the future holds — particularly for startups and scaling businesses building security programs without large IT teams.

TLDR

- AI detects and responds to threats at machine speed through pattern analysis and behavioral monitoring

- Defensive use cases include automated threat detection, phishing prevention, vulnerability scanning, and incident response

- Attackers weaponize AI for highly targeted phishing, adaptive malware, and automated exploitation

- AI-driven security reduces false positives, scales with threat volume, and cuts response times that manual processes can't match

- Growing businesses can now access sophisticated AI security capabilities through unified cloud-native platforms — no large IT team required

What Is AI in Cybersecurity and How Did It Evolve?

AI in cybersecurity uses machine learning, deep learning, and intelligent automation to analyze massive volumes of data, identify patterns, and detect threats at machine speed. Rather than relying on static signatures that only flag known threats, AI systems learn from data to recognize novel attack patterns and adapt defenses continuously—at a pace no human analyst can match.

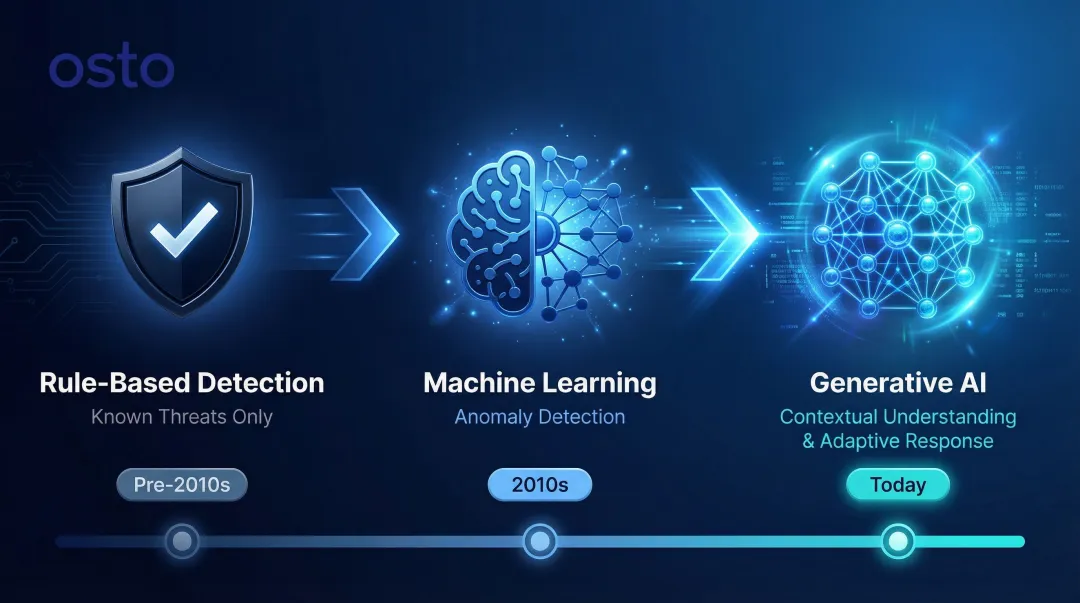

The evolution happened in three distinct phases:

- Rule-based detection — Early systems matched traffic and files against static signatures. Fast and reliable against known threats, useless against anything new.

- Machine learning — The 2010s shift enabled systems to learn from data, detecting anomalies that didn't fit existing patterns.

- Generative AI — Today's large language models and GANs add contextual understanding, synthetic threat simulation, and automated response generation.

The staffing reality makes this shift urgent. ISACA's 2025 State of Cybersecurity Report found that 65% of cybersecurity teams have unfilled positions, and 55% are understaffed.

The ISC2 2025 study adds that 84% of security professionals lack moderate AI/ML knowledge — making it the most pressing skills gap on security teams. When human capital is scarce and attack surfaces keep expanding, AI becomes the most scalable path forward.

Key Use Cases of AI in Cybersecurity

AI is now embedded across the full security lifecycle—from detection to response. Understanding where it's applied helps organizations prioritize the right tools and investments.

Threat Detection and Anomaly Detection

AI uses unsupervised machine learning to establish baselines of normal network behavior and automatically flag deviations—unusual login patterns, abnormal traffic spikes, unexpected data transfers. This contrasts sharply with legacy signature-based detection, which can only catch known threats.

CISA's 2023-2024 Roadmap for Artificial Intelligence explicitly states that CISA actively leverages AI tools for threat detection, prevention, and vulnerability assessments. CISA's own SOC uses unsupervised machine learning to process terabytes of daily network log data from the Cyber Analytic and Data System to detect trends, patterns, and anomalies.

The results when AI-based anomaly detection supplements or replaces signature-based systems are measurable. A Forrester Total Economic Impact study on Palo Alto Networks Cortex XSIAM found that AI-driven correlation cut alerts requiring manual tier-1 SOC review by 80%—from 25,000 to just 4,500 per quarter.

Academic research corroborates this: a 2023 comparative study found that hybrid deployments combining signature filtering with ML anomaly detection decreased false positives by 40% while maintaining high detection rates for novel attacks.

Phishing and Social Engineering Defense

AI-powered email security tools analyze content, sender context, linguistic patterns, and metadata to identify phishing attempts—including sophisticated spear-phishing that impersonates executives. AI detects subtle cues like misspelled domains, spoofed addresses, and unusual urgency faster and more accurately than rule-based filters.

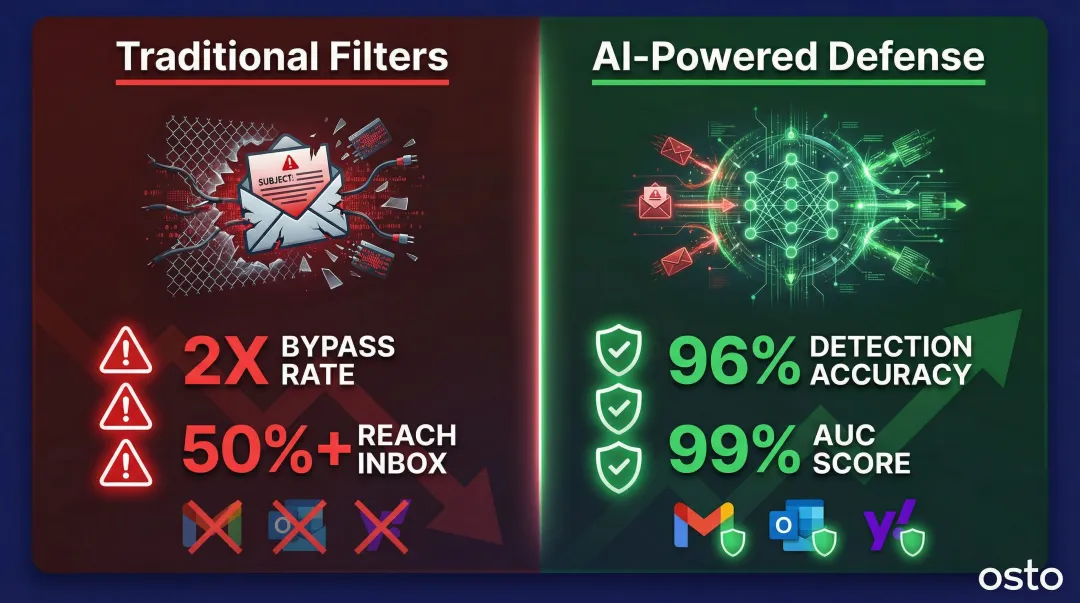

The Anti-Phishing Working Group recorded over 1 million phishing attacks in Q1 2025 alone—the highest quarterly total since late 2023. Rule-based filters aren't keeping pace: AegisAI's 2026 research found that AI-generated phishing emails evade traditional filters at nearly double the rate of human-written attacks, reaching inboxes more than half the time.

AI-powered defenses, however, are closing that gap. A 2025 peer-reviewed study applied 60 stylometric features across machine learning models to detect AI-generated phishing. The XGBoost model achieved 96% accuracy and an AUC score of 99%, successfully identifying threats that bypassed standard Gmail, Outlook, and Yahoo filters.

Vulnerability Management and Web Application Protection

AI continuously scans networks, applications, and cloud environments to identify weaknesses—including zero-day vulnerabilities—before attackers can exploit them. AI-powered scanners improve detection accuracy and reduce false positives by using machine learning to categorize findings by severity and prioritize remediation.

Platforms like Osto apply machine learning to automate this process end-to-end. Key capabilities include:

- Detects OWASP Top 10 vulnerabilities, SQL injection, remote code execution, and HTTP/2 buffer overflow issues

- Categorizes findings by severity so teams address critical risks first

- Provides precise location details, affected endpoints, and step-by-step remediation guidance

This gives growing businesses the same vulnerability detection speed and accuracy that enterprise teams rely on—without requiring a large security operations center. Osto's AI scanner runs on configurable schedules and has been optimized for 2x faster scan execution with improved detection accuracy.

Behavioral Analytics and Identity Verification

AI monitors user and entity behavior across sessions—tracking typing patterns, login times, access requests—and flags anomalies that may indicate compromised accounts or insider threats. This approach connects directly to zero-trust security models where continuous verification is required, not just one-time authentication at login.

By establishing behavioral baselines for each user, AI systems can detect when an authenticated user starts acting outside their normal patterns—accessing unusual files, logging in from unexpected locations, or requesting permissions they've never needed before. That shift from static login checks to continuous behavioral monitoring is what makes zero-trust architectures operationally viable at scale.

Automated Incident Response

Generative AI can automate the initial steps of incident response: categorizing threats by severity, isolating affected systems, generating remediation scripts, and recommending containment strategies. This reduces mean time to respond (MTTR) and reduces pressure on lean security teams.

Rather than requiring human analysts to manually triage every alert, AI handles repetitive, high-volume tasks and escalates only the most critical or ambiguous incidents for human judgment. This allows security teams to focus on complex investigations and strategic decisions rather than drowning in alert fatigue.

How Attackers Are Weaponizing AI

AI doesn't discriminate between defenders and attackers. The same tools that help security teams detect threats faster also give adversaries the ability to launch more convincing, more scalable, and harder-to-detect attacks. Understanding how attackers exploit AI is the first step toward countering it.

AI also enables vishing (voice phishing) through realistic voice cloning and deepfake video to impersonate executives or trusted contacts. The FBI IC3 2024 report warns that criminals use short audio clips to clone voices for financial scams and unauthorized account access. In one documented Business Email Compromise case from February 2024, threat actors cloned executive video and audio to authorize a $25.6 million fraudulent transfer.

AI-Generated Malware and Evasion Tactics

AI helps attackers develop malware that adapts and evolves to evade detection—modifying its own code to bypass antivirus signatures. In 2025, the Google Threat Intelligence Group discovered PROMPTFLUX, an experimental VBScript dropper malware that queries the Gemini API to rewrite its own source code on an hourly basis. This "just-in-time" self-modification allows the malware to constantly alter its signature to evade static detection.

Evasion attacks go further — subtly altering inputs to fool AI-based classifiers, such as making tiny modifications to malware payloads that the model would otherwise flag. Attackers systematically probe AI defenses to identify where detection breaks down, then exploit those gaps at scale.

Adversarial Attacks on AI Systems

Attackers are targeting the AI models used in security tools themselves. The MITRE ATLAS framework catalogs these adversarial machine learning tactics, including prompt injection (crafting malicious inputs to manipulate LLM behavior), data poisoning (corrupting training data to manipulate model behavior or create backdoors), and evasion techniques (crafting input data to prevent AI models from correctly identifying malicious content).

Palo Alto Unit 42 recently observed real-world indirect prompt injection attacks where malicious instructions were hidden within web page HTML using zero font size to trick AI-based ad review systems into approving scam content. Securing the AI pipeline itself is now as important as securing the network perimeter.

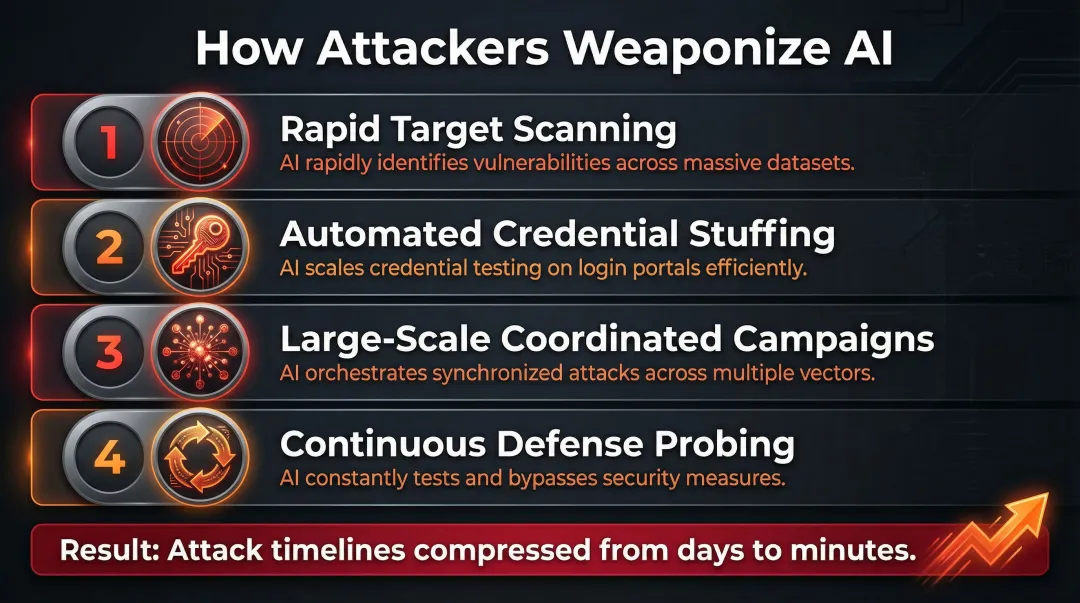

Automated Vulnerability Exploitation and Credential Attacks

AI allows threat actors to compress attack timelines that used to take days into minutes. Common AI-assisted techniques include:

- Rapid target scanning to identify exploitable weaknesses across thousands of systems

- Automated brute-force and credential stuffing with AI-optimized password-guessing

- Large-scale coordinated campaigns launched faster than any human team could execute

- Continuous probing of defenses to adapt attacks in real time

The result is a higher volume of attacks, greater sophistication, and significantly less time for defenders to respond.

Benefits and Challenges of AI-Powered Security

Benefits of AI in Cybersecurity

Speed and Scale:

AI processes and correlates massive volumes of security data—logs, network traffic, endpoint signals—in real time, enabling faster threat detection and response than human analysts can achieve manually. The IBM Cost of a Data Breach 2025 report found that security teams using AI and automation extensively shortened their breach lifecycles by 80 days, reducing Mean Time to Identify and Mean Time to Contain. This speed translated directly to financial impact, lowering average breach costs by $1.9 million compared to organizations that did not use these solutions.

A Forrester TEI study on the TEHTRIS XDR AI Platform found that automated response workflows reduced Mean Time to Investigate by 70%. Over 60 days, the platform automatically managed roughly 27 billion security events, requiring only one manual analyst intervention.

Reduced Human Error and Analyst Fatigue:

By automating repetitive tasks—log analysis, alert triage, routine scans—AI frees security teams to focus on complex, high-judgment work and reduces the risk of errors introduced by fatigue or oversight. When analysts face 4,484 alerts daily with an 83% false-positive rate, automation becomes essential to prevent critical threats from slipping through due to alert fatigue.

Continuous and Adaptive Learning:

Unlike static rule sets, AI models improve over time as they process new threat data—meaning defenses evolve alongside the threat landscape without requiring constant manual updates. In practice, this means a model that struggled with a novel phishing variant in January may handle it reliably by March—without a single manual rule update.

Challenges and Limitations

The benefits are real, but AI-powered security comes with trade-offs worth understanding before deployment.

Data Quality and Accuracy:

AI systems require high-quality, large-volume training data. Biased or poisoned data degrades detection effectiveness. While AI dramatically reduces false positives compared to legacy systems, no system is perfect—and missed threats carry serious consequences.

Explainability ("Black Box" Problem):

Many AI models can't show their work. Security teams need to understand why a transaction was flagged—both to validate findings and to satisfy compliance requirements. Without explainability, incident response workflows stall.

Governance and Oversight Gaps:

Implementing AI security tools effectively still requires human oversight, regular audits, and defined governance policies. Smaller teams may lack the internal structure to manage this well. The IBM 2025 report found that 20% of breaches involved "Shadow AI" (unsanctioned employee AI use), which added $670,000 to average breach costs and disproportionately exposed customer PII. 63% of organizations currently have no AI governance policies in place.

The Future of AI in Cybersecurity

The next phase of AI in cybersecurity will be defined by escalating sophistication on both sides. As attack methods grow more advanced, defenses will have to match them. Autonomous security operations — AI-driven SOCs — will handle more of the detection-response cycle with minimal human intervention. Regulations will tighten globally too, with frameworks like the EU AI Act mandating security-by-design requirements for high-risk AI systems.

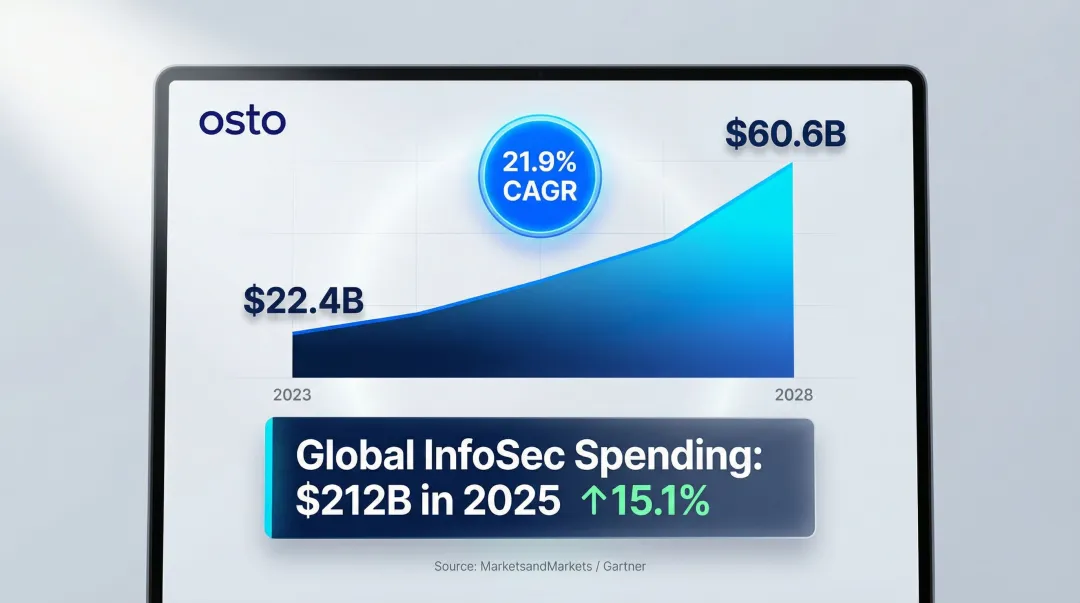

MarketsandMarkets projects the global Artificial Intelligence in Cybersecurity market to grow from $22.4 billion in 2023 to $60.6 billion by 2028, representing a compound annual growth rate of 21.9%. Gartner forecasts that worldwide end-user spending on information security will grow 15.1% to reach $212 billion in 2025, driven heavily by the adoption of GenAI and the need to secure cloud environments.

Several emerging applications will reshape how security teams operate:

- Predictive threat intelligence — anticipating attacks based on pattern analysis and threat actor behavior before incidents occur

- Continuous automated defense testing — AI systems probing for weaknesses on an ongoing basis rather than periodic manual audits

- AI infrastructure security — protecting the AI tools themselves as organizations expose new attack surfaces by deploying them at scale

These shifts are narrowing the gap between enterprise-grade security and what growing businesses can realistically deploy. Cloud-native, consolidated platforms now put serious AI-driven protection within reach of startups and scaling teams — no dedicated SOC required. Platforms like Osto bring AI-driven web protection, multi-cloud posture management across Azure, AWS, and GCP, and Zero Trust Network Access into a single dashboard, giving leaner organizations the same defensive depth that once required entire security departments.

Frequently Asked Questions

How is artificial intelligence used in cyber security?

AI analyzes behavioral patterns to detect threats, filters phishing attempts through content and metadata analysis, and continuously scans for vulnerabilities. These capabilities let security systems identify and respond to threats faster — and at far greater scale — than manual methods allow.

How has AI affected cyber security?

AI has transformed cybersecurity by enabling real-time threat detection, reducing response times from hours to minutes, and improving accuracy through machine learning. However, it has also raised the stakes by giving attackers new tools to launch more sophisticated, automated, and personalized attacks at scale.

What is AI cybersecurity?

AI cybersecurity is the application of machine learning, deep learning, and intelligent automation to detect, prevent, and respond to cyber threats. Unlike static rule-based systems, AI continuously learns and adapts to new attack patterns, improving defenses over time without manual intervention.

What are the biggest risks of using AI in cybersecurity?

Key risks include data poisoning and adversarial attacks targeting AI models, and false positives or false negatives that either overwhelm teams or let threats slip through. Deploying AI without proper governance can also introduce new vulnerabilities or create compliance gaps.

Can AI replace human cybersecurity experts?

AI augments human experts rather than replacing them. It handles repetitive tasks like log analysis and pattern recognition, while human judgment remains essential for complex investigations, strategic decisions, and ethical oversight.

How can small and growing businesses benefit from AI-powered cybersecurity?

AI-powered platforms give growing businesses automated threat detection, vulnerability scanning, and cloud security posture management — without needing a large security team. Unified dashboards consolidate these functions into one place, making robust protection practical and scalable as the business grows.