Lovable is one of the most popular AI coding platforms for founders and indie developers. You describe what you want to build, Lovable writes the code, connects it to a Supabase database, and has a working app running in minutes. No backend engineering required. No DevOps overhead. Ship fast.

In April 2026, a security researcher disclosed that any free Lovable account could read another user’s source code, database credentials, AI chat history, and customer data. Every project built on the platform before November 2025 was exposed.

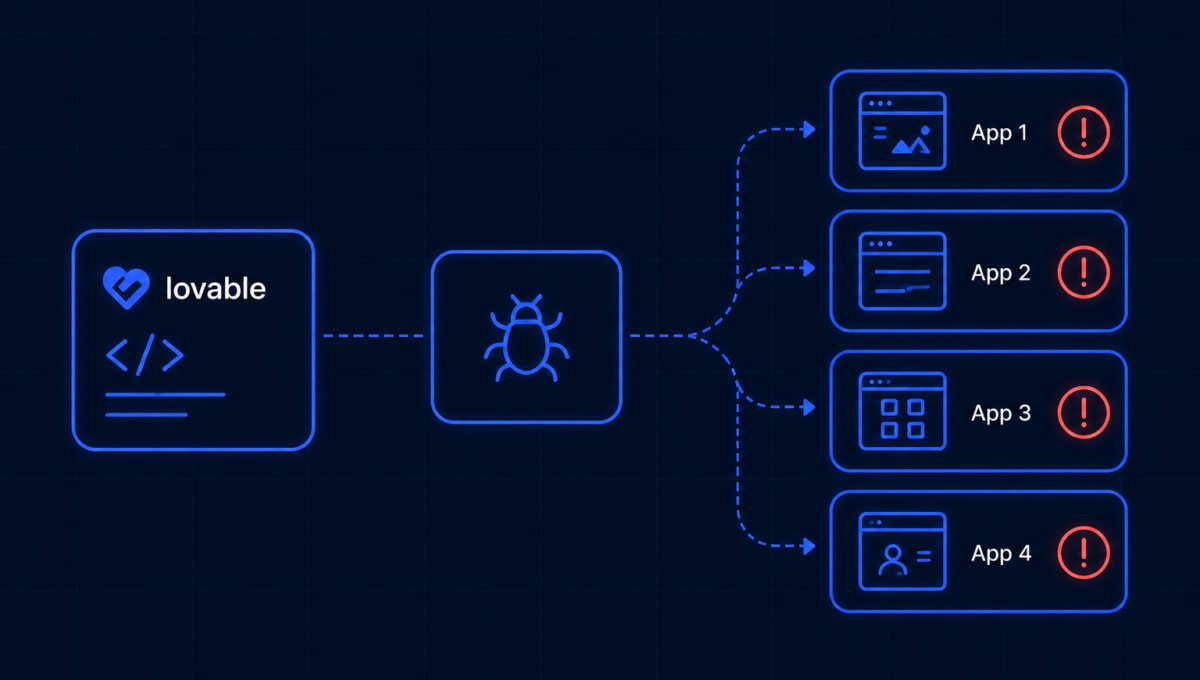

The attacker did not need to exploit a vulnerability in your code. Lovable wrote the vulnerability into your code for you. Thousands of developers shipped it to production without knowing it was there.

What Lovable does and why this matters

Lovable generates full-stack applications from natural language prompts. The frontend runs in the browser. The backend uses Supabase, a platform that exposes a PostgreSQL database directly through a public API. This is a legitimate, powerful architecture. Supabase is used by thousands of production applications.

The critical detail is how Supabase controls access to database tables: through a feature called Row Level Security (RLS). RLS policies define which users can see which rows. Without them, any caller holding the public API key — which is embedded in every Supabase client application and designed to be public — can read every row in every table.

Lovable’s generated code called Supabase directly from the browser using the public anon key, without attaching the RLS policies that restrict what that key can access. The database was open to anyone who found the endpoint.
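To make that concrete, here is a minimal sketch of a self-check you could run against a project you own. It assumes the standard Supabase REST path (`/rest/v1/<table>`) and the usual `apikey` and `Authorization` headers; the function names, the project URL, and the table name are hypothetical placeholders, not anything Lovable or Supabase ships. The request is only built here, not sent.

```python
import json
import urllib.request
from urllib.parse import quote


def build_anon_probe(project_url: str, anon_key: str, table: str) -> urllib.request.Request:
    """Build (but do not send) a GET for one row of `table` using only the anon key.

    `project_url`, `anon_key`, and `table` are placeholders you supply for
    a project you own.
    """
    base = project_url.rstrip("/")
    url = f"{base}/rest/v1/{quote(table, safe='')}?select=*&limit=1"
    headers = {
        "apikey": anon_key,                     # the public key from the client bundle
        "Authorization": f"Bearer {anon_key}",  # no user session, anon role only
        "Accept": "application/json",
    }
    return urllib.request.Request(url, headers=headers, method="GET")


def classify_probe(status: int, body: str) -> str:
    """Interpret the response to an anon-key probe.

    "exposed"      -> rows came back with no login
    "empty"        -> nothing visible (RLS filtered the rows, or the table is empty)
    "blocked"      -> the API refused the request
    "inconclusive" -> anything else
    """
    if status in (401, 403):
        return "blocked"
    if status == 200:
        rows = json.loads(body)
        if isinstance(rows, list) and rows:
            return "exposed"
        return "empty"
    return "inconclusive"
```

To run the probe, pass the request to `urllib.request.urlopen` and catch `urllib.error.HTTPError` for 4xx responses, then feed the status and body to `classify_probe`. An "exposed" result on a table holding user data means the anon key alone is enough to read it.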

The numbers

That is roughly one in ten of the apps scanned. Each vulnerable app had at least one endpoint where an unauthenticated attacker could dump entire database tables. The exposed data found across these apps included user emails, home addresses, personal debt amounts, payment records, and API keys from connected services.

The timeline that should concern every platform user

Why this is not a Lovable problem. It is an industry problem.

Lovable is the most visible example of a pattern happening across every AI coding platform. The Cloud Security Alliance tracked at least 20 documented security incidents exposing tens of millions of users across AI-powered applications between January 2025 and February 2026. Nearly every incident traced back to the same preventable root causes: missing authorization, exposed secrets in client code, and weak multi-tenant isolation.

When a platform embeds an insecure default, every application built on that platform inherits the vulnerability. The developer who shipped the app had no idea. The users whose data was exposed had no idea. The only people who knew were the researchers who went looking and the attackers who didn’t announce themselves.

Enterprise AI adoption grew 187% between 2023 and 2025. AI coding tools in particular have compressed the time between “idea” and “live production app with real user data” from months to hours. The security review process has not compressed at the same rate.

If you have built anything on Lovable, do this now

- Rotate every Supabase anon key and service role key used in any Lovable project immediately. Treat them as compromised.
- Check `pg_policies` in your Supabase database and verify that every table that holds user data has an RLS policy that actually restricts access, not just a policy that exists.
- Test your RLS policies from the client SDK, not from the Supabase SQL Editor. The SQL Editor bypasses RLS entirely and will show you all data regardless of what your policies say.
- Never store sensitive credentials, API keys, or PII in any Lovable project’s source code or chat history. They are part of the exposure surface.
- If your app handles real user data, treat it like any other production vendor: review it against your SOC 2 or security framework controls before it goes live with customer data.
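The policy check in the list above can be partly automated. The sketch below is one possible approach, not an official Supabase tool: it assumes you have already exported two result sets from your database, the `rowsecurity` flag per table from `pg_tables` and the rows of `pg_policies`, and it flags tables with RLS switched off and policies whose `USING` expression is simply `true`. The function name and the sample table names are hypothetical.

```python
from typing import Iterable, List, Mapping, Optional


def audit_rls(
    rls_enabled: Mapping[str, bool],
    policies: Iterable[Mapping[str, Optional[str]]],
) -> List[str]:
    """Return human-readable findings for tables that deserve a manual review.

    rls_enabled: table name -> value of pg_tables.rowsecurity
    policies:    rows from pg_policies, each with at least
                 tablename, policyname, cmd, and qual (the USING expression)
    """
    by_table = {}
    for policy in policies:
        by_table.setdefault(policy["tablename"], []).append(policy)

    findings = []
    for table in sorted(rls_enabled):
        if not rls_enabled[table]:
            # No RLS at all: any role with table privileges sees every row.
            findings.append(f"{table}: RLS is disabled")
            continue
        for policy in by_table.get(table, []):
            qual = (policy.get("qual") or "").strip().lower()
            if qual == "true":
                # A policy exists, but it does not restrict anything.
                findings.append(
                    f"{table}: policy {policy['policyname']!r} "
                    f"({policy['cmd']}) allows every row"
                )
    return findings
```

A `USING (true)` policy is sometimes intentional, for example on a table of public content, so treat each finding as a prompt for review rather than a verdict. This check also does not replace testing from the client SDK with a real anon session.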

The broader lesson

AI coding tools are genuinely useful. They compress development time, lower the barrier to building, and let small teams ship products that would have required much larger engineering teams a few years ago.

But the AI writes code the way a developer who has never been paged at 2am about a data breach writes code. It optimises for the thing working, not for the thing being secure. The developer who reviews the generated code may not catch what the AI missed. The security review that would catch it is the step that gets skipped because the whole point of the tool was to move fast.

The Lovable breach is the logical outcome of shipping AI-generated code that handles real user data without an independent security review. It is the third such incident on the same platform in thirteen months. The pattern is not going to stop on its own.

Every app you ship with real user data needs a security review that the AI coding tool cannot do for itself.

Osto runs penetration tests and source code assessments on AI-generated and traditionally built applications alike, identifying the authorization gaps, exposed credentials, and misconfigured access controls that ship silently into production. If you are building on Lovable, Cursor, or any AI coding platform and handling real customer data, a VAPT scoped to your application is the check that tells you whether what the AI wrote is actually safe to ship.

The Lovable breach took thirteen months and three incidents to surface publicly. Your users’ data does not have thirteen months to wait.